Content creation has a "Production Bottleneck." You have 50 ideas for videos, but you only have time to film 2. The setup, the lighting, and the retakes kill your momentum.

Argil promises to break this bottleneck. It allows you to train a "Digital Twin"—an AI avatar that looks and sounds exactly like you. You type the script, and the AI generates the video. But can it fool a real audience on TikTok or LinkedIn? In this Argil review, we cloned ourselves to find out.

Quick Summary

The "Camera Killer"

Argil isn't just another corporate-training video tool like HeyGen. It specializes in dynamic, social-first content: the avatars have natural head movements, and the lip-sync is surprisingly accurate. If you want to post daily without filming daily, this is the tool.


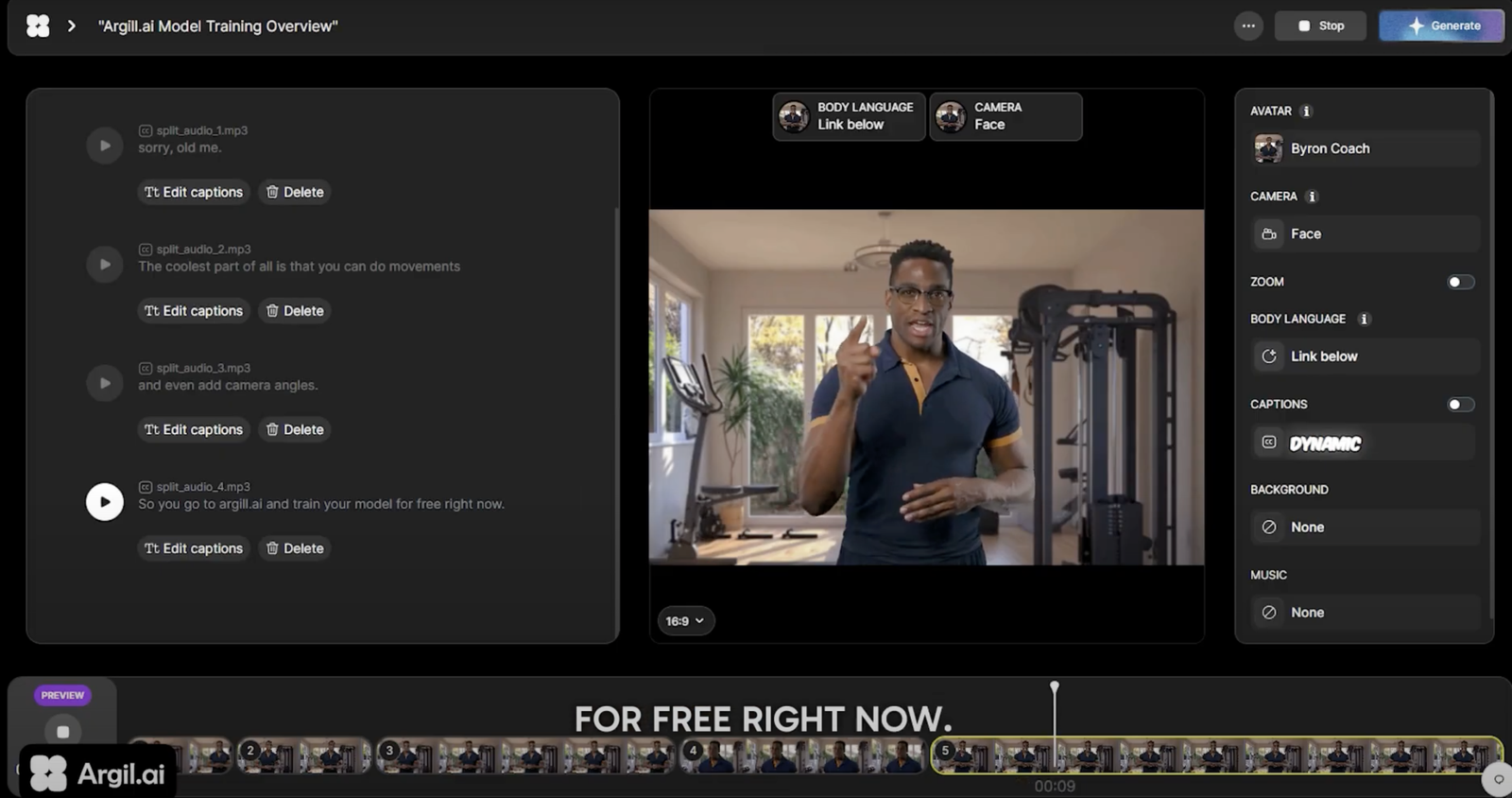

What Argil Actually Does

Argil allows you to upload a 2-minute video of yourself talking to a camera. It analyzes your face, voice, and mannerisms.

Once the model is trained, you can type any text into the dashboard, and Argil generates a video of "You" saying it. It's not a generic stock avatar; it's your face and your voice. It also adds captions and inserts B-roll automatically.

Core Features

How to Use Argil — The Clone Test

We put the "Digital Twin" to the test.

- The Training: We recorded a 2-minute video: "Hi, I'm Ajit. I'm looking at the camera, pausing, and smiling." Good lighting is crucial here.

- The Processing: We uploaded the file. Argil took about 10 minutes to "train" the model.

- The Generation: We typed a script about "The Future of AI Tools."

- The Result: The output was shockingly good. The eyebrows moved correctly, and the voice had my cadence. The only "tell" was a slight blur around the mouth during fast speech, but on a mobile screen it was invisible.

Example Use Cases

Who Argil Is Best For

- Content Creators: Who want to scale volume but are limited by time.

- Introverts: Who hate the process of filming but want the benefits of video marketing.

- Agencies: Who want to offer "CEO Video Updates" as a service to clients.

Who Should Avoid Argil

- Cinematographers: If you need cinematic depth of field and dynamic camera movement, AI isn't there yet. The camera is static.

- Emotion-Heavy Acting: If the script requires crying or screaming, the AI face will look unnatural. It's best for "talking head" info.

Pricing & Credits

Argil operates on a credit system based on video minutes generated.

| Plan | Price (Approx.) | Includes |
|---|---|---|
| Starter | ~$29/mo | 10 min of video / 1 custom avatar |
| Creator | ~$79/mo | 40 min of video / 3 custom avatars |
| Agency | Custom | Unlimited avatars / API access |
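To compare the plans on a like-for-like basis, here is a minimal Python sketch that divides each plan's approximate monthly price by its included minutes. The figures are the approximate ones from the table above and may change. The Agency plan is left out because its price is custom. On these numbers, and assuming you use the full allowance, Starter works out to roughly $2.90 per generated minute and Creator to just under $2.

```python
# Approximate plan figures from the pricing table; actual prices may differ.
# Agency is omitted because its pricing is custom.
PLANS = {
    "Starter": (29.0, 10),  # (approx. USD per month, minutes of video included)
    "Creator": (79.0, 40),
}


def cost_per_minute(price_usd: float, minutes: int) -> float:
    """Effective cost of one generated minute, assuming the full allowance is used."""
    if minutes <= 0:
        raise ValueError("minutes must be positive")
    return price_usd / minutes


# Print the effective per-minute rate for each plan.
for name, (price, minutes) in PLANS.items():
    print(f"{name}: ~${cost_per_minute(price, minutes):.2f} per minute of video")
```

If you generate fewer minutes than the allowance, your effective rate goes up, so the cheaper per-minute plan only pays off at volume.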

How Argil Compares (vs HeyGen and Synthesia)

| Feature | Argil | HeyGen | Synthesia |
|---|---|---|---|
| Vibe | Social / Dynamic | Corporate / Static | Training / Slide |
| Training Speed | Fast | Medium | Slow |
| Hand Gestures | Good | Limited | Static |
| Price | Competitive | Expensive | Expensive |

Limitations & Reality Check

- The "Uncanny Valley": It is about 95% convincing, but occasionally the eyes don't blink at quite the right moment. It's best used for short-form content (under 60 seconds), where viewers don't have time to scrutinize the face.

- Hand Movements: While better than competitors, the hands can sometimes clip through the body or look repetitive.

Best Practices: "The Pattern Interrupt"

Hide the AI flaws with editing.

Pros & Cons

Pros

- Incredible time-saver for high-volume creators.

- Training process is simple (no tech skills needed).

- Output quality is high enough for TikTok/Shorts.

- Multi-language support expands your audience.

Cons

- Subscription cost is monthly (recurring expense).

- Still lacks the "soul" of a passionate human performance.

- Requires high-quality input footage for good results.

Frequently Asked Questions

Is it safe? Can someone steal my face?

Argil requires a "Live Verification" step (nodding, turning your head) to prove you are the person in the video before training starts. This check is designed to prevent unauthorized cloning.

Does it work for YouTube Long Form?

It's possible, but not recommended for 10-minute videos. The lack of dynamic movement gets boring. It shines for 30-90 second updates.

Can I change my clothes?

No. The avatar wears whatever you wore in the training video. If you want different outfits, you need to train multiple avatars (e.g., "Casual Ajit" vs "Suit Ajit").

How long does rendering take?

Rendering is fast—typically 1 minute of video takes about 2-3 minutes to process.

Final Verdict

If you are building a personal brand on LinkedIn or Instagram but struggle to find time to film, Argil is a superpower.

It effectively "clones" your presence, allowing you to be in two places at once. While it won't replace a high-end studio production for a documentary, it is the perfect tool for the daily content grind.

Reviewed by Ajit

Founder & Growth Engineer. I test software APIs, run live campaigns, and inspect the code so you don't have to.
